Intro

Few things are more demotivating for a security researcher than watching a carefully documented vulnerability report languish in triage for months, buried under a wave of AI-generated submissions. Agents are the future of vulnerability research, but a sharp bifurcation in capabilities has formed across the ecosystem. Expert-guided AI systems are successfully unearthing deeply complex zero-days, while mass adoption is simultaneously flooding maintainers with thousands of garbage reports.

Unlike AI-generated issues or pull requests, which are public and can be evaluated and closed quickly by the broader community, security vulnerabilities require careful inspection, independent verification, private triage, and a thorough understanding of a project’s specific threat model, trust boundaries, and architectural nuances. The human labor and agent resources required to independently verify and disprove these submissions have become unsustainable. This post explores the patterns and incentives I’ve observed behind this asymmetry.

A Verification Checklist for AI Slop Vulnerability Reports

I’ve seen many researchers design systems that filter AI-generated vulnerability reports using linguistic markers and other deterministic methods. While some deterministic checks are useful in a multi-layered system, this approach fails to distinguish between presentation and technical substance, sacrificing valid signal to manage volume. As AI-assisted writing becomes the industry standard, AI-speak is becoming indistinguishable from standard professional communication. Instead of penalizing reports for their linguistic style, triage teams must prioritize technical hygiene. These reports fail in classifiable ways, for reasons that map directly to how models are trained and what context they’re missing. I’ve been running agentic security pipelines and reviewing submissions to public bounty programs, and the same failure modes keep showing up. What follows is a rough verification model I’ve landed on for improving agentic triage pipelines; it doubles as a taxonomy of AI slop. A report that fails any check is classified and closed without progressing further, so the cheapest filters run first and the most expensive reasoning is reserved for reports that survive every prior check.
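The fail-fast ordering described above can be sketched as a short-circuiting loop. This is a minimal Python sketch under stated assumptions: the `Report` fields, the `Check` signature, and the `triage` function are hypothetical names introduced for illustration, not part of any existing tool.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Report:
    """Hypothetical, simplified stand-in for a submitted report."""
    title: str
    referenced_symbols: list[str]


# A check returns None when the report passes, or a short rejection reason.
Check = Callable[[Report], Optional[str]]


def triage(report: Report, checks: list[tuple[str, Check]]) -> tuple[str, Optional[str]]:
    """Run checks in the given order and stop at the first failure.

    Callers are expected to order `checks` from cheapest to most expensive,
    so later (costlier) checks never run for reports that fail early.
    """
    for name, check in checks:
        reason = check(report)
        if reason is not None:
            return name, reason
    return "passed", None
```

The return value names the check that closed the report, which is what lets the same loop double as a classifier for the failure modes below.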

Check 1: Does the Reported Code Exist?

Sometimes a reported finding doesn’t just lack impact or context; the code it describes doesn’t exist. Models fabricate code paths, parameters, function signatures, and endpoints that aren’t present in the codebase. A report might describe a detailed exploit chain against an API route that was never defined, or reference a function argument that the code doesn’t accept. The model pattern-matched on what a vulnerability in this kind of project would typically look like and generated a plausible but fictional finding. Verification means checking whether the functions, endpoints, parameters, and code paths described in the report are present in the current codebase. This is the cheapest possible filter: a grep, an AST lookup, or something similar is enough.
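As an illustration of the AST-lookup variant, here is a minimal sketch using Python’s standard `ast` module. It assumes the target project is written in Python and that the report’s claimed function names have already been extracted; `defined_functions` and `missing_symbols` are hypothetical helper names.

```python
import ast


def defined_functions(source: str) -> set[str]:
    """Collect every function and method name defined in a Python source string."""
    tree = ast.parse(source)
    return {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }


def missing_symbols(claimed: list[str], sources: list[str]) -> list[str]:
    """Return the claimed function names that no source file defines."""
    known: set[str] = set()
    for source in sources:
        known |= defined_functions(source)
    return [name for name in claimed if name not in known]
```

Any non-empty result from `missing_symbols` is a strong signal that the report describes code that is not in the repository, though dynamically generated functions would need a separate check.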

Check 2: Is the Vulnerability Class Correct?

The code is real, but does it actually exhibit the behavior the report claims? The difference between this check and the ones that follow is that the model didn’t need to trace callers or understand composition. It needed to read the code directly in front of it more carefully.

A report flags SQL injection because it sees string concatenation near a database call, but the concatenation builds a log message and the query on the next line is parameterized. A report flags code injection because it sees eval(), but the argument is a hardcoded string, not user input. A report flags an open redirect because it sees redirect(), but the argument is an internal route name, not a URL. In each case, the answer was right there in the same function the model was already analyzing. These near-misses take longer to triage than outright fabrications because the triager has to read the same code the model should have read in order to confirm that the finding doesn’t hold.
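The eval() case lends itself to a cheap syntactic pre-check. The sketch below, again assuming a Python target and using only the standard `ast` module, reports eval() calls whose first argument is anything other than a literal; `eval_calls_with_dynamic_args` is a hypothetical name. It is a heuristic, not a taint analysis: a variable that happens to hold a constant is still flagged, which errs on the side of keeping the report alive for a closer look.

```python
import ast


def eval_calls_with_dynamic_args(source: str) -> list[int]:
    """Return line numbers of eval() calls whose first argument is not a literal constant."""
    flagged = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id == "eval"
            and node.args
            and not isinstance(node.args[0], ast.Constant)
        ):
            flagged.append(node.lineno)
    return sorted(flagged)
```

If a report claims code injection at a line that this function does not flag, the eval() argument is a hardcoded literal and the finding can be closed at this check.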

Check 3: Is the Finding Current?

The code is real and the classification is correct, but has the issue already been resolved? This check catches two failure modes that share the same root cause but require different verification methods.

Stale findings occur when models regurgitate patched vulnerabilities from their training data. LLM training data often includes vulnerability details and technical writeups describing issues in the very systems researchers are exploring. Without explicit tooling or context to verify the current state of a codebase, models default to their training data and “rediscover” vulnerabilities that have already been fixed. The result is reports that present known, resolved issues as novel findings. Verification requires checking commit history, changelogs, or prior CVE records.

It’s worth noting that deliberately providing models with context about previous CVEs and writeups is a valid tactic for discovering bypasses or similar vulnerability classes, but that is a targeted research strategy, separate from the problem of a model regurgitating stale findings. Here is an example from curl, where the submitter’s LLM rehashed CVE-2024-11053.

Dependency and version confusion occurs when models report known CVEs against dependencies without verifying whether the installed version is actually vulnerable. Prototype pollution in lodash is one that I see very frequently in my own research. A project calls _.merge with user-controlled input, and the model flags it as prototype pollution because older versions of lodash were susceptible. But the vulnerability was patched in an earlier stable version, and the project pins a current version where _.merge is safe. The model recognized a function that was once dangerous and a usage pattern that would have been exploitable, but did not check whether the version in use still has the vulnerability. The same pattern shows up with other npm ecosystem staples where the risky functions still exist and are still called, but the underlying bugs that enabled the sinks were fixed years ago. Verification requires checking the lockfile against the CVE’s affected version range.
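A minimal sketch of the lockfile comparison follows. It assumes an npm `package-lock.json` in the layout that keys installed packages under `"packages"` as `node_modules/<name>`, and it assumes plain dotted versions with no pre-release tags. The package name and version numbers in the usage note are placeholders, not real advisory data, and both function names are hypothetical.

```python
import json
from typing import Optional


def installed_version(lockfile_text: str, package: str) -> Optional[str]:
    """Look up the pinned version of a top-level package in a package-lock.json string."""
    data = json.loads(lockfile_text)
    entry = data.get("packages", {}).get(f"node_modules/{package}")
    return entry["version"] if entry else None


def parse_version(text: str) -> tuple[int, ...]:
    """Parse a plain dotted version like '1.2.3' into a comparable tuple.

    Pre-release tags and semver range operators are deliberately not handled.
    """
    return tuple(int(part) for part in text.split("."))


def is_affected(installed: str, first_patched: str) -> bool:
    """True when the installed version predates the first patched release."""
    return parse_version(installed) < parse_version(first_patched)
```

For a hypothetical package `examplelib` whose advisory lists `2.4.0` as the first patched release, a report against a project pinning `2.4.1` fails this check. Comparing parsed tuples rather than raw strings matters: as strings, `"2.10.0"` sorts before `"2.9.0"`.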

Check 4: Is the Vulnerable Code Reachable?

The finding is potentially real, correctly classified, and unpatched, but can an attacker actually reach it? This check requires call graph analysis to trace from the public API surface inward and determine whether any external caller can invoke the flagged function with unsanitized input. Models evaluate functions in isolation without understanding how they’re composed, and this is where the verification cost jumps.

I’ve seen reports against libraries like OpenSSL that identify vulnerabilities in internal functions that are never part of the public API surface. Upstream callers within the library handle validation and sanitization before these functions are ever invoked. The model lacked the context or instruction to trace the call graph from the public API inward, and the researcher did not verify whether the function was actually reachable by external callers. Here is an example from curl, which flags a safe usage of strcpy.

The model analyzes a function without understanding who calls it, how, and with what guarantees. Reports that die at this check are expensive to close because the triager has to do the call graph work the model skipped.
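The reachability question reduces to a graph search once a call graph exists. The sketch below assumes the call graph has already been extracted by some other tool and is given as a mapping from caller to callees; the function names in the usage note are invented for illustration.

```python
from collections import deque


def reachable_from(call_graph: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Breadth-first search from the public entry points through caller -> callee edges."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        caller = queue.popleft()
        for callee in call_graph.get(caller, set()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen
```

Given a graph such as `{"public_parse": {"validate", "internal_copy"}, "debug_dump": {"unsafe_helper"}}` with `public_parse` as the only entry point, `unsafe_helper` is not in the result, so a report against it fails here. A flagged function that is reachable still needs the harder follow-up: whether validation on the path leaves any attacker-controlled input intact by the time it arrives.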

Check 5: Does Exploitation Cross a Meaningful Security Boundary?

The finding is potentially real, current, and reachable with attacker-controlled input, but does it matter? This is the most expensive check because it requires understanding the project’s design intent, deployment context, and what constitutes actual harm. It contains two distinct failure modes.

Missing threat model reports flag intended behavior as a vulnerability. Models can identify flaws in business logic in ways that traditional SAST and DAST tools have failed for years. However, business logic requires stateful understanding and contextual awareness of what a user should and should not be allowed to do. Without an understanding of the application’s threat model, models treat all configurable values as attacker-controlled inputs without understanding the privilege level required to set them, flagging environment variables and server-side configuration as entry points for injection when these values are only accessible to operators with direct access to the server. They identify server-side request functionality operating as designed and report it as an SSRF, report that an admin endpoint lacks rate limiting, or that an internal API does not enforce authentication that is handled at a higher layer.

The same failure extends to API contracts. Many projects explicitly delegate responsibility to developers to use their software securely. A library might document that callers must sanitize inputs before passing them to an unsafe function. The function is reachable, and a caller can pass unsanitized input to it, but the model flags the function as vulnerable without considering the contract. The vulnerability exists only if the contract is violated, which is a bug in the calling code, not in the library.

In each case, the model identified something real but could not distinguish intended behavior from a security flaw. Verification requires the threat model, architecture documentation, or deployment context.

Missing security impact reports flag unintended behavior that doesn’t provide attacker value. Models fixate on certain vulnerability classes without evaluating whether the finding carries a tangible security impact. An agent will report a regular expression denial of service (ReDoS) by catastrophic backtracking when the input is length-limited, submit a self-XSS report where a user can only execute scripts against themselves, or flag a missing rate limit on a public, read-only endpoint. These are technically real observations, potentially bugs, but they don’t cross the bar for a vulnerability because the security impact is negligible or nonexistent. Here’s an example from curl, where the report suggests sending two requests to the server in a single curl command, which can also be accomplished with other curl command line options. There is no security impact.

The distinction matters for pipeline design. Missing threat model failures are answerable from documentation: a published SECURITY.md that defines trust boundaries and scoping lets a triage agent resolve these without human intervention. Missing security impact failures require judgment about what constitutes meaningful harm in context, which is harder to automate and often falls back on human review.

Check 6: Is the Proof of Concept Legitimate?

Check 6 is the final evidentiary check, even though PoC review may surface earlier failures in reachability and impact. A report’s PoC might appear to “pass” before Check 1 is ever run, but fabricated PoCs are the natural evolution of slop in an ecosystem that starts requiring reproduction as a submission check. By this point, the code exists, the classification is correct, the finding is current, the code is reachable, and the impact is meaningful. The final check asks whether the proof of concept actually demonstrates what it claims to, or whether the model manufactured exploitability that doesn’t exist in the real application.

Models that are instructed to produce working PoCs will sometimes do whatever it takes to make them pass. When a function isn’t exploitable as written, the model doesn’t always assess that. Instead, it rewrites the function, wraps it in a harness that strips validation, hardcodes attacker-controlled input where the application would sanitize it, or reconstructs the call chain with the safety checks removed. The result is a PoC that technically executes and demonstrates the vulnerability class described in the report, but against code that doesn’t match what’s actually deployed. The model solved a different problem than the one it was asked to solve. This check requires assessing the PoC’s assumptions against the actual codebase and confirming that the exploit path the PoC exercises is the same one the application exposes.

This is distinct from phantom vulnerabilities at Check 1, where the code doesn’t exist at all. Here, the code is real, but the PoC misrepresents how it runs. It’s also reasonable to kick a valid report back to a researcher to fix if they provide a bogus PoC.
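One narrow slice of this check can be automated: detecting a PoC that ships its own altered copy of a project function. The sketch below assumes both the project and the PoC are Python and compares top-level functions structurally with the standard `ast` module; `function_fingerprints` and `modified_in_poc` are hypothetical names. It does not catch harnesses that bypass validation by calling an inner function directly.

```python
import ast


def function_fingerprints(source: str) -> dict[str, str]:
    """Map each top-level function name to a structural dump of its AST.

    ast.dump omits line numbers by default, so formatting and comments are ignored.
    """
    tree = ast.parse(source)
    return {
        node.name: ast.dump(node)
        for node in tree.body
        if isinstance(node, ast.FunctionDef)
    }


def modified_in_poc(project_source: str, poc_source: str) -> list[str]:
    """Return names of functions the PoC redefines with a different body than the project's."""
    project = function_fingerprints(project_source)
    poc = function_fingerprints(poc_source)
    return sorted(
        name for name in poc if name in project and poc[name] != project[name]
    )
```

A non-empty result means the PoC is exercising code that differs from what the project ships, which is grounds to send it back rather than to confirm the finding.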

Maintainers Have Responsibilities Too

A few of these failure modes aren’t purely the responsibility of researchers to mitigate. Code composition failures (e.g., missing call context) and outright hallucinations (e.g., stale findings) can typically be attributed to context management and model capacity failures in the researcher’s system design, or to the lack of any such design. However, security context failures (e.g., missing threat model or missing security impact) can often be partially mitigated with proper documentation. When a model misidentifies a threat model or flags intended behavior as a vulnerability, it’s often because the project never documented its threat model or API contract in the first place. These are the conversations that researchers would have had with maintainers long before LLMs. The most successful projects solved this issue many years ago by providing clear-cut security guarantees through API contracts in their documentation. Maintainers who publish a clear SECURITY.md that defines the threat model, scoping boundaries, trust assumptions, and what classes of issues they do and don’t want reported give both human researchers and LLMs something to work against. Some great examples are the bug bounty submission guidelines of popular repositories.

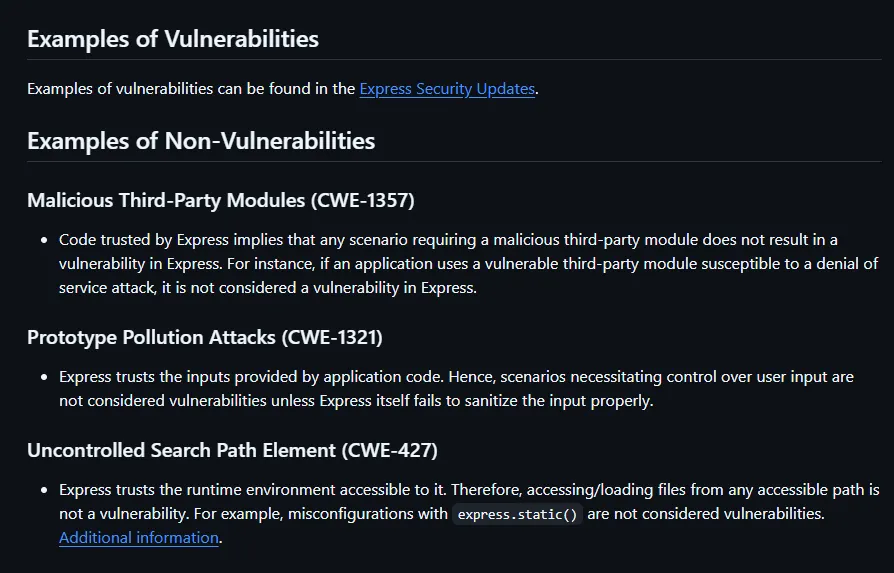

The Express framework provides a great example of threat model documentation.

None of this solves the volume problem. A well-documented threat model won’t stop someone from bulk-submitting unverified findings, but it does make the triage faster, the rejections clearer, and the valid reports more precise. The projects drowning in slop are often the ones that left interpretation up to the submitter, and that gap is exactly what many researchers exploit.

Exploiting Bug Bounty Programs

Bug bounty platforms like HackerOne and Bugcrowd were built on the assumption that the cost of producing a vulnerability report reflects a baseline of effort and understanding. They formalized this into an economy where researchers are paid for valid findings, and the payout structure incentivizes high-quality work. That economy folds when the cost of generating a professional-looking report drops to zero. If generating a report takes seconds and even a small percentage of reports results in payouts, the optimal strategy is volume. Submit a hundred reports and see what sticks. A 5% hit rate is more profitable than the effort of manually verifying five findings.

Earlier this year, I selected a few open-source AI repositories for in-depth threat modeling and code security review. In this process, I found huntr.com, a popular open-source bug bounty program focused on AI projects. The platform also provides LLM wrappers for identifying vulnerabilities and generating “zero-shot” vulnerability reports for bounties. Alongside this financial incentive, I’ve found that AI projects, likely due to the user base, are significantly more prone to AI slop.

Take the MLflow repository, for example. The repository received 31 vulnerability reports on March 16th alone, every single one of which was AI slop. Looking at issues on the repository itself, there are ~100 issues specific to vulnerability reports, most of which have been rejected as slop.

This problem extends to every other bug bounty platform and program, but the real cost is visible in the individual projects that have been forced to respond. Since 2019, curl had run one of the more successful open-source bug bounties on HackerOne, paying out over $90,000 for 81 genuine security flaws. By mid-2025, genuine vulnerabilities had dropped to roughly 5% of submissions. In January 2026, they shut down the program entirely. None of the AI-assisted reports submitted to curl over the previous six years had identified a genuine vulnerability (Death by a thousand slops). Node.js imposed restrictions after receiving more than 30 low-quality reports in a single month, eventually blocking new researchers from submitting entirely (New HackerOne Signal Requirement for Vulnerability Reports).

The pattern across these cases is the same. The platforms externalize the cost of triage to maintainers. Maintainers are overwhelmingly unpaid volunteers. The incentive structure rewards submission volume with no proportional cost for invalid reports. In recent months, platforms like HackerOne and Bugcrowd have leaned more heavily into reputation systems, report quality scores, cooldown penalties, and AI-assisted triage filters, but the industry is still looking for a solid solution.

Manufactured Expertise and Misguided Vigilance

Bug bounty payouts are not the only currency in vulnerability research. CVE credits, GitHub Security Advisory acknowledgments, and the public visibility that comes with them have become career infrastructure. GitHub’s Private Vulnerability Reporting process is particularly exposed. Any researcher can submit a report to any public repository that has the feature enabled, and the maintainer receives it with no tooling to assess the submitter’s track record or the report’s validity. Unlike bounty platforms, which have at least begun implementing reputation checks and quality filters, GitHub’s advisory process has no equivalent friction. For a solo maintainer who has never dealt with a formal vulnerability disclosure, a confident, well-structured report describing a critical exploit chain is difficult to evaluate and even harder to reject.

The rise of autonomous agents has compounded the problem. Tools like OpenClaw allow anyone to set up an AI agent that scrubs open-source projects for potential vulnerabilities and submits reports without human review. OpenClaw’s own team has received a wave of reports that flagged intended behavior as vulnerabilities, the same missing-threat-model failures described above. Their security page now requires vetted reports with exact vulnerable paths, reproducible PoCs against the latest version, and demonstrated impact tied to the project’s documented trust boundaries (OpenClaw SECURITY.md).

The problem has also drawn institutional attention. A coalition including OpenAI, Anthropic, AWS, Google, Microsoft, and GitHub recently pledged $12.5 million to the OpenSSF and Alpha-Omega to support more sustainable open-source security. These are meaningful first steps, but they remain downstream responses to a problem whose immediate cost is still absorbed by maintainers (Linux Foundation Announces $12.5 Million in Grant Funding from Leading Organizations to Advance Open Source Security).

The Token Tax of Defending the Triage Pipeline

The software security ecosystem cannot rely on self-regulation. The only scalable defense for maintainers drowning in unverified reports is to fight automation with automation. For $20 a month, a speculative researcher can use frontier models to generate hundreds of complex, articulate, and structurally flawless reports. To automatically disprove a single one of those reports, a maintainer’s triage agent needs equivalent or better reasoning ability with significantly more context. Generating a plausible-looking vulnerability report requires only local context: a function, a pattern, a plausible narrative. Disproving it requires full semantic context: the call graph, the version history, the threat model, the API contract. The asymmetry of effort that began as a human time problem becomes a token economics problem. We are effectively shifting the cost of spam from maintainers’ free time to their actual bank accounts.

Open-source projects rarely have the budget for frontier-tier triage. They run smaller models (Gemini Flash, quantized local models) that may lack the context window and reasoning capability to recognize a complex Opus-generated exploit chain for the phantom vulnerability that it is. The result is that invalid reports slip through automated filters and fall back on human review, increasing the potential for unwarranted security advisories and requiring additional effort to untangle the model’s confusion.

This burden extends to bounty platforms themselves as they deploy their own triage pipelines and absorb the development, compute, and token costs required to filter low-quality reports. Even direct subsidies from model providers, like Anthropic’s recent offer of Claude Max access to select open-source maintainers, are stopgaps that don’t address the underlying economics. The best frontier models used for security research, including those with guardrails removed, are available to only a handful of well-funded companies. When those companies point their models at open-source projects, volunteer maintainers absorb the real cost of defending against them.

Conclusion

The vulnerability reporting and triage pipeline is not a unique problem. It is part of a broader wave of AI slop hitting pull requests, issue trackers, documentation contributions, and code reviews across open source. Security is just where the cost hits harder because every report demands private, expert attention before it can be dismissed.

This doesn’t mean agentic security research is headed in the wrong direction. Models with proper context management, codebase-aware tooling, and threat model integration are already surfacing findings that would take human researchers weeks. The failure modes cataloged here describe what breaks when agents are deployed without those foundations, and the tooling is improving fast. A year from now, this problem will look fundamentally different.

What won’t resolve itself is the structural gap between researchers and maintainers. Reputation systems and quality scores are no longer sufficient friction. Platforms need to give maintainers enforceable controls like mandatory reproduction environments, automated pre-screening against documented threat models, and hard checks that reject submissions before they ever reach a human. The platforms that survive this transition will be the ones that treat triage cost as a design constraint, not an externality absorbed by volunteers.

I’d like to follow up this post with a “How to Implement A Triage Pipeline.”